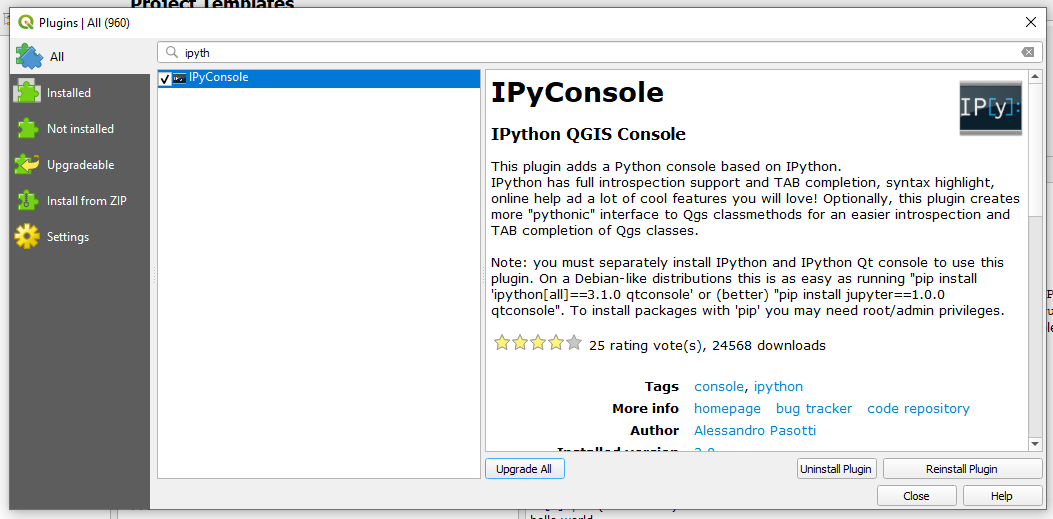

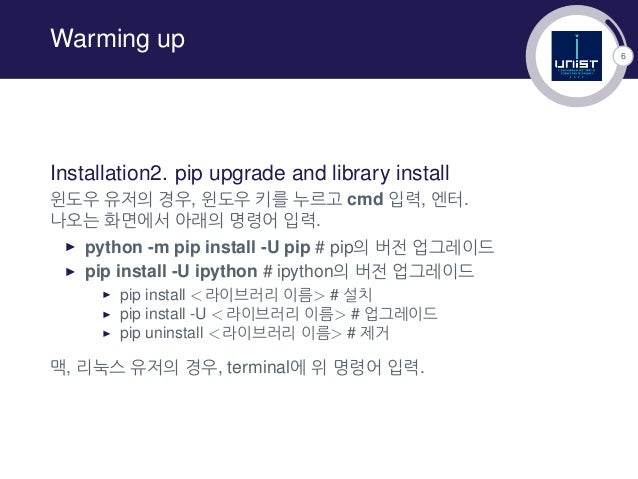

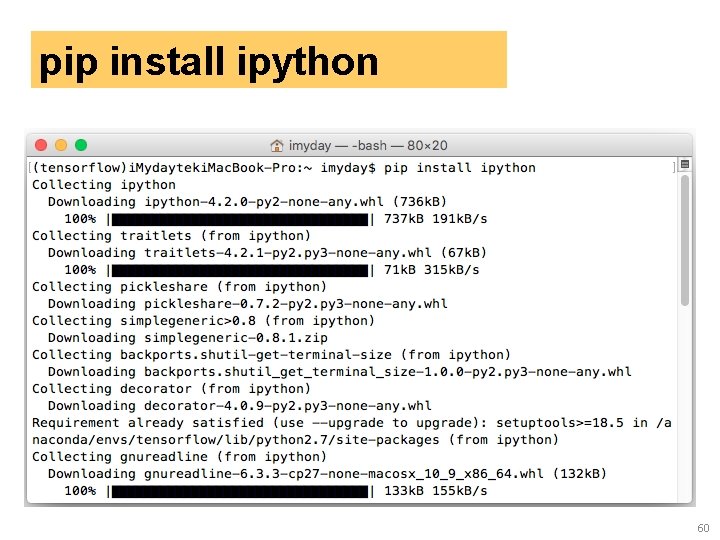

#INSTALL IPYTHON ALL INSTALL#

Detailed instructions on getting ipython-notebook set up or installed. Please note: IPython Notebook is now no longer supported at all.

WinPython includes IPython Notebook and qtconsole as well as all the dependencies for IPython. It also includes a good lightweight IDE called Spyder.

Put everything in a nice case: finally we put everything into a nice case, connect the power supply, and attach everything to a switch. The result is the final mini cluster.

I am practicing PySpark in the Cloudera VM, and PySpark needs to be launched through IPython. All the old Cloudera VM guides use easy_install to install IPython, but this does not work in VirtualBox 5.2.0 r118431 and later. It fails with the error `error: Could not find suitable distribution for Requirement.parse('ipython=1.2.1')`. By default, pip is not installed in the Cloudera VM, and pip cannot be installed by easy_install either. After some searching, I noticed that the Cloudera VM has yum installed, and yum can install pip. The solution is therefore to install pip with yum and then use pip to install IPython.
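After installing pip through yum and IPython through pip, it can be useful to confirm that both are visible to the interpreter. The following is a minimal sketch, assuming a Python 3 interpreter (`importlib.util.find_spec` is not available on the older Python 2 that some Cloudera VMs ship); the helper name `missing_modules` is hypothetical and not part of any tool mentioned above.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names that cannot be found by this interpreter."""
    # find_spec returns None when a top-level module is not installed.
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(["pip", "IPython"])
    if missing:
        print("Not installed:", ", ".join(missing))
    else:
        print("pip and IPython are both importable")
```

If the script reports a missing module, the install step for that module most likely ran against a different Python interpreter than the one used here.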

#INSTALL IPYTHON ALL DOWNLOAD#

The fourth option is to use IPython without installing it at all. Well, if you download the source distribution and just run. Unfortunately, if we install IPython through PyCharm's package installer, all the requirements will not be installed.

To set the environment variables, switch to the hduser and open `nano ~/.bashrc`, then add:

- `export HIVE_HOME=/opt/apache-hive-2.1.1-bin`
- `export PATH=$PATH:$HIVE_HOME/bin`

Go to `cd $HIVE_HOME/conf` and, as the orangepi user, copy the config template with `sudo cp hive-env.sh.template hive-env.sh`. Then open it with `sudo nano hive-env.sh` and insert the location of Hadoop: `export HADOOP_HOME=/opt/hadoop-2.7.3`.

Next we need to create the /tmp folder and a separate Hive folder in HDFS with:

- `$HADOOP_HOME/bin/hadoop fs -mkdir /user/hive`
- `$HADOOP_HOME/bin/hadoop fs -mkdir /user/hive/warehouse`
- `$HADOOP_HOME/bin/hadoop fs -chmod g+w /tmp`
- `$HADOOP_HOME/bin/hadoop fs -chmod g+w /user/hive/warehouse`

In the next step we have to create the metastore with `schematool -initSchema -dbType derby`.

Finally we need to make `sudo cp hive-default.xml` and make some changes, such as replacing `$` with `$HIVE_HOME/iotmp` (hint: use Ctrl+W in nano to find the locations). The affected properties are described as:

- Location of Hive run time structured log file
- Temporary local directory for added resources in the remote file system.

That's it, Hive should now be installed, and we can check the installation.

To connect IPython and Hive, we first need to install the Python package manager pip as the orangepi user with `sudo apt-get install python-pip python-dev build-essential`. Then we have to add an entry to `core-site.xml` in `$HADOOP_HOME/etc/hadoop/`.

Switch to the orangepi user, edit the startup script with `sudo nano /etc/rc.local`, and insert the following lines before `exit 0`:

- `su - hduser -c "ipython notebook --profile=pyuser &"`
- `su - hduser -c "/opt/hadoop-2.7.3/sbin/start-dfs.sh &"`
- `su - hduser -c "/opt/apache-hive-2.1.1-bin/bin/hiveserver2 &"`
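The `/etc/rc.local` step above places three startup commands before the `exit 0` line. As a rough illustration of that edit, here is a minimal sketch that performs the insertion on the file's text. The function name `insert_before_exit` and the constant `STARTUP_COMMANDS` are hypothetical, the profile flag is written in its double-dash form `--profile`, and the sketch only transforms a string; writing the result back to `/etc/rc.local` (which needs root) is left out on purpose.

```python
def insert_before_exit(rc_text, commands):
    """Return rc_text with `commands` placed just before the last `exit 0` line.

    If there is no `exit 0` line, the commands are appended at the end.
    """
    lines = rc_text.splitlines()
    # Locate the last line that is exactly `exit 0`, ignoring surrounding spaces.
    exit_index = len(lines)
    for index in range(len(lines) - 1, -1, -1):
        if lines[index].strip() == "exit 0":
            exit_index = index
            break
    # Skip commands that are already present so the edit can be repeated safely.
    new_commands = [cmd for cmd in commands if cmd not in lines]
    updated = lines[:exit_index] + new_commands + lines[exit_index:]
    return "\n".join(updated) + "\n"


# Commands taken from the rc.local step; paths are the ones used in this guide.
STARTUP_COMMANDS = [
    'su - hduser -c "ipython notebook --profile=pyuser &"',
    'su - hduser -c "/opt/hadoop-2.7.3/sbin/start-dfs.sh &"',
    'su - hduser -c "/opt/apache-hive-2.1.1-bin/bin/hiveserver2 &"',
]

if __name__ == "__main__":
    sample = "#!/bin/sh -e\n# rc.local\nexit 0\n"
    print(insert_before_exit(sample, STARTUP_COMMANDS), end="")
```

Because commands that already exist are skipped, running the function twice on the same text leaves it unchanged, which makes it harder to end up with duplicate service starts at boot.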

To be able to create nice plots we will also install matplotlib via `sudo apt-get install python-matplotlib`. Switch to the hduser and create a new IPython profile with `ipython profile create pyuser`. Open the config file with `nano /home/hduser/.ipython/profile_pyuser/ipython_config.py` to adjust the profile, and create a startup script with `nano /home/hduser/.ipython/profile_pyuser/startup/00-pyuser-setup.py` to establish the connection to Spark at startup. To start the IPython notebook, execute `ipython notebook --profile=pyuser`.

To install Hive we first need to download the package as the orangepi user in the `/opt/` directory with `sudo wget $` (look here for the latest release). As usual we extract the file with `sudo tar -xvzf hive-2.1.1/apache-hive-2.1.` and change the permissions with `sudo chown -R hduser:hadoop apache-hive-2.1.1-bin/`.
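The contents of the startup script `00-pyuser-setup.py` are not shown above. One common way to make PySpark importable from an IPython profile is to put Spark's bundled Python sources on `sys.path`, so the following is a minimal sketch under that assumption and not necessarily what the original script contained. The `/opt/spark` default and the helper names `spark_python_paths` and `add_spark_to_path` are placeholders; the real location should come from the `SPARK_HOME` environment variable.

```python
import glob
import os
import sys


def spark_python_paths(spark_home):
    """Return the directory and archives that PySpark needs on sys.path."""
    paths = [os.path.join(spark_home, "python")]
    # Spark bundles py4j as a zip under python/lib; the version in the name varies.
    pattern = os.path.join(spark_home, "python", "lib", "py4j-*-src.zip")
    paths.extend(sorted(glob.glob(pattern)))
    return paths


def add_spark_to_path(spark_home):
    """Prepend the PySpark paths to sys.path, skipping ones already present."""
    for path in reversed(spark_python_paths(spark_home)):
        if path not in sys.path:
            sys.path.insert(0, path)


# SPARK_HOME is read from the environment; /opt/spark is only a placeholder default.
spark_home = os.environ.get("SPARK_HOME", "/opt/spark")
add_spark_to_path(spark_home)
```

Files in a profile's `startup` directory run each time IPython starts with that profile, so after this runs an `import pyspark` in the notebook should resolve against the Spark installation, provided `SPARK_HOME` points at it.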

#INSTALL IPYTHON ALL UPDATE#

2.1 Install IPython

As the orangepi user, install IPython with `sudo apt-get update` and `sudo apt-get install ipython ipython-notebook`.

In this section we will install some tools that make life easier. In contrast to Spark or Hadoop, they only need to be installed on the main node, not on all cluster nodes.